Community Moderation and How to Protect Your Discord

Community moderation isn't one-size-fits-all. By analyzing tens of thousands of ban reasons and security flags across our platform, we can see how bad actors operate and how server admins fight back. We examined two core datasets from Communityone: general Web2 bans, and targeted security flags from our analytics, which skew heavily toward giveaway and Web3 communities. While tactics differ with a community's incentives, the underlying behavior of bad actors is strikingly similar across the board.

Web2 Moderation: The Subjective Frontline

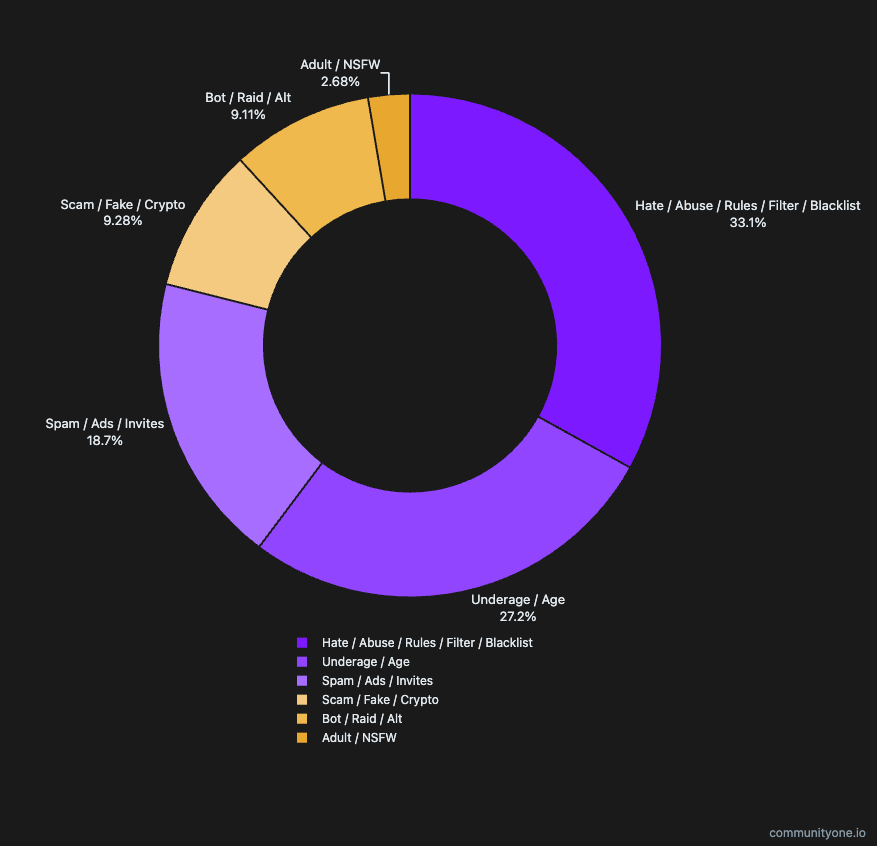

We classified thousands of free-text Discord ban reasons into standardized categories to understand what human moderators are actually fighting on a daily basis.
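As a rough illustration of what this kind of classification can look like, here is a minimal keyword-based sketch in Python. It is not the pipeline behind the figures in this post: the category names are borrowed from the sections below, while the regular expressions and the function names `classify_ban_reason` and `category_shares` are assumptions made up for the example.

```python
import re
from collections import Counter

# Hypothetical keyword rules, checked in order. Real ban reasons are far
# messier, so treat these patterns as placeholders rather than a tested taxonomy.
CATEGORY_PATTERNS = {
    "Scam": re.compile(r"\b(scam\w*|phish\w*|fake giveaway)\b", re.I),
    "Spam": re.compile(r"\b(spam\w*|advertis\w*|join my server)\b", re.I),
    "Hate / Abuse": re.compile(r"\b(hate|slur\w*|harass\w*|troll\w*|abus\w*)\b", re.I),
    "Young Account": re.compile(r"\b(underage|account age|new account)\b", re.I),
}


def classify_ban_reason(reason: str) -> str:
    """Return the first category whose pattern matches, else 'Other'."""
    for category, pattern in CATEGORY_PATTERNS.items():
        if pattern.search(reason):
            return category
    return "Other"


def category_shares(reasons):
    """Return each category's share of the given ban reasons, in percent."""
    counts = Counter(classify_ban_reason(r) for r in reasons)
    total = sum(counts.values())
    return {category: round(100 * n / total, 1) for category, n in counts.items()}
```

Because the first matching rule wins, the order of the dictionary decides how ambiguous reasons are counted, which is one reason category percentages from free-text data should be read as approximate.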

The "Young Account" Overlap (19.6% of bans)

Although often labeled "Underage / Age," a large portion of these bans are actually triggered by AutoMod rules that target very young accounts, not the physical age of the person behind the keyboard. The same "burner account" strategy is the primary weapon in Web3 and giveaway attacks, which makes these two seemingly different worlds similar in both proportion and intent.

Spam vs. Scam—It Depends on Your Niche

On the surface, spam bans (13.5%) outnumber scam bans (6.7%) about two to one. However, the split is highly audience-dependent. If your server is related to money, crypto, or trading, you will see a disproportionately high number of scam- and trading-related bans. Casual servers are more likely to be hit with simple "join my server" spam.

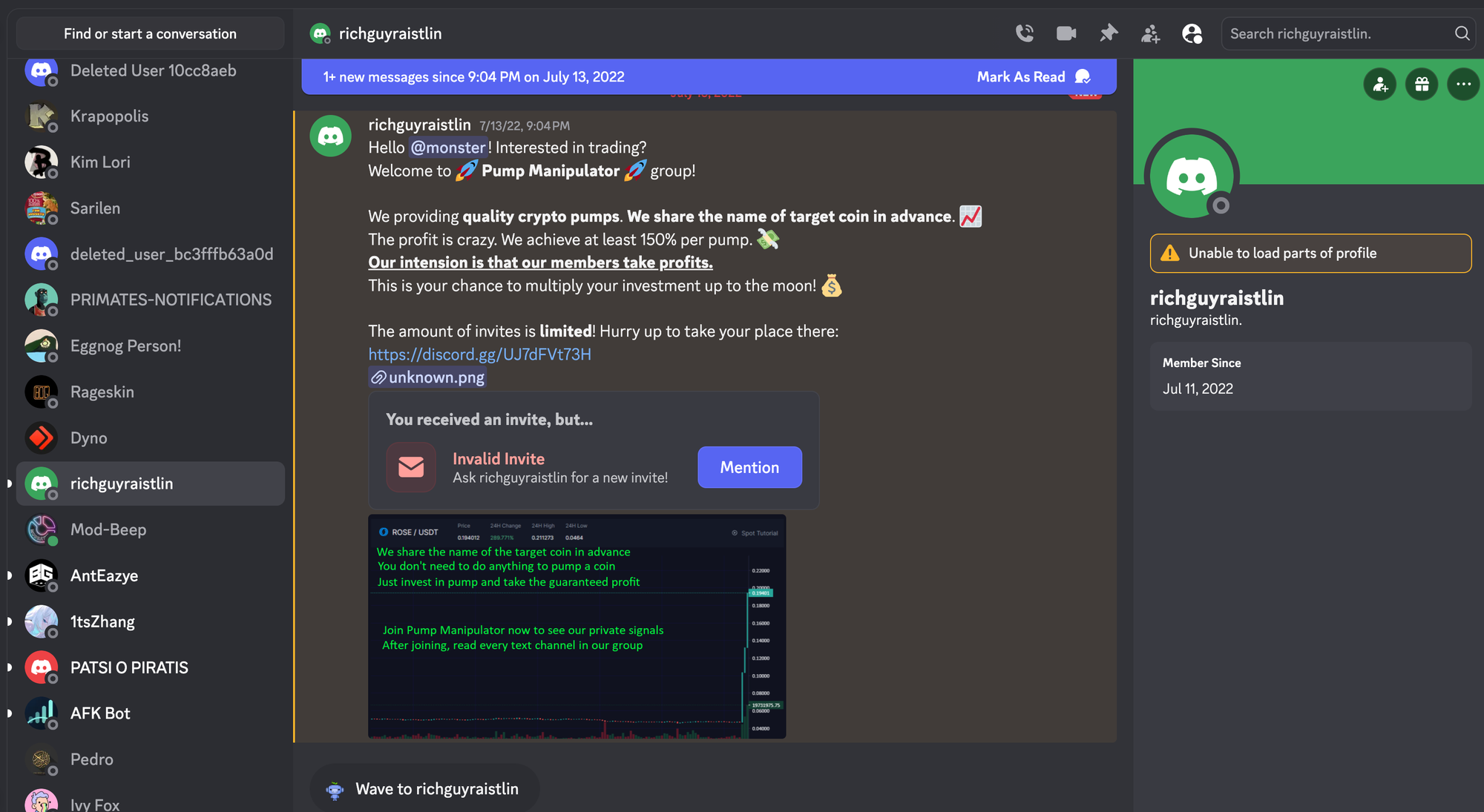

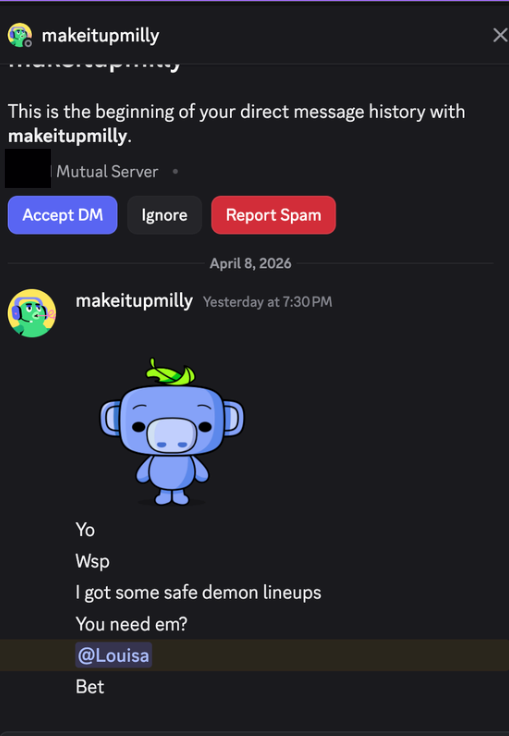

The Invisible "DM" Problem

A large amount of abuse happens via Direct Messages. Because Discord lacks a standard, universal "DM Abuse" ban category, moderators file these bans under "Spam" or "Scam" depending on the content of the message, which obscures the true scale of the DM problem in the data.

The Gray Area of Hate and Abuse (23.9% of bans)

This is the largest category, but it is also the most subjective. When reviewing individual ban reasons, enforcement varies wildly. Because the definition of "abuse" or "trolling" is highly community-dependent, this category is where you most often hear stories about "abusive mods" or unfair bans.

The Reality of NSFW and CP (1.9% of bans)

Despite widespread fear of CP on the platform, it accounts for a very small share of bans in our data. Discord handles these severe violations proactively at the platform level. In our data, subjective abusive behavior is cited far more often than CP-related issues.

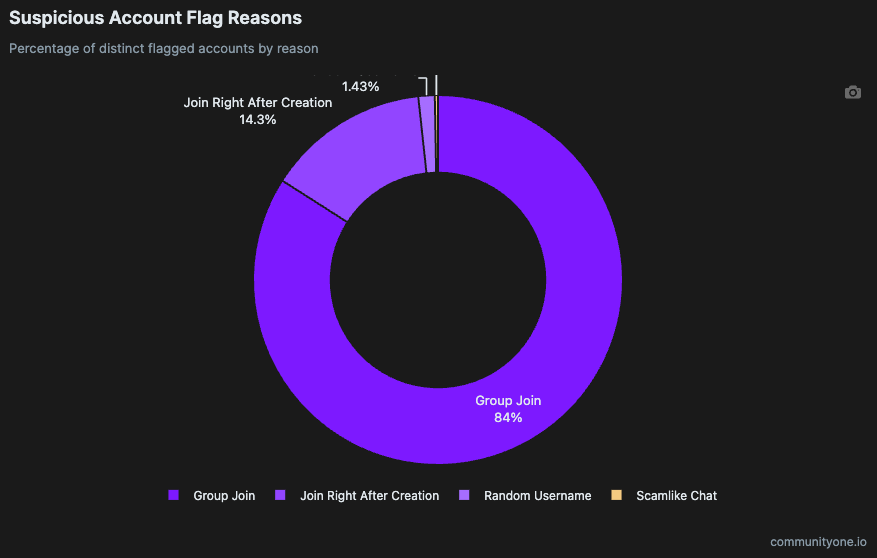

Web3 & Giveaway Servers: The Automated Invasion

It's Not Just Web3—It's the Freebies

While these patterns are famous in Web3, they aren't exclusive to it. Any Web2 server that runs heavily on giveaways and freebies will attract the exact same sophisticated, automated attacks. If you give away enough free stuff, your data will look exactly like a Web3 server's data.

The "Group Join" Phenomenon (84.0% of flags)

Attackers operate massive fleets of accounts. These aren't simple spam bots; they act like humans, they say "hi," and they engage normally in chat. Because their in-server behavior mimics regular users, standard chat filters won't catch them. The only reliable way to catch these sophisticated rings is by monitoring their highly unusual, coordinated join patterns. They travel in packs, and catching them at the door is your only real defense.
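One way to picture join-pattern monitoring is a sliding window over join timestamps. The sketch below is a simplified illustration, not the detection logic behind our flags: the function name `flag_group_joins`, the 60-second window, and the minimum group size of 5 are all assumptions chosen for the example.

```python
from collections import deque
from datetime import timedelta


def flag_group_joins(joins, window_seconds=60, min_group_size=5):
    """Flag users who joined as part of a tight burst of joins.

    joins: iterable of (user_id, joined_at) pairs, joined_at being a datetime.
    Returns the set of user_ids seen in any window of `window_seconds`
    that contains at least `min_group_size` joins.
    """
    window = timedelta(seconds=window_seconds)
    recent = deque()  # joins that fall inside the current window
    flagged = set()
    for user_id, joined_at in sorted(joins, key=lambda j: j[1]):
        recent.append((user_id, joined_at))
        # Drop joins that are older than the window relative to this join.
        while joined_at - recent[0][1] > window:
            recent.popleft()
        if len(recent) >= min_group_size:
            flagged.update(uid for uid, _ in recent)
    return flagged
```

A timing-only rule like this will also flag legitimate surges, such as the minutes after an announcement, so in practice it would need to be combined with other signals before anyone is removed.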

How to Protect Your Community

For Regular Web2 Communities

- Gate by Account Age: This is one of the easiest and most effective defenses. Bots like Dyno or Discord's native AutoMod can automatically kick or restrict accounts created within the last 24 to 72 hours.

- The Application Barrier: We are seeing more servers adopt an application-style entry process. If you make joining complicated enough, you eliminate a massive chunk of spam and scam accounts. However, this comes with a high cost to user acquisition and overall growth.

- Verification Tools Kill Raids: If you use strict verification tools like Wick, traditional Web2 raids are basically a thing of the past. Wick will automatically quarantine the raid accounts before they can cause damage.

- Post-Join Defenses (Report & Educate): Once a user is inside, there's a limit to automated defenses (especially for DMs). Have a dedicated, easy-to-use place to report spam and scams. Pin explicit rules about official team communication (e.g., list the exact Discord IDs of your team), and establish normal procedures clearly (e.g., "We will never DM you first").
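For readers curious how the account-age gate in the first bullet works under the hood: a Discord user ID is a snowflake whose upper bits encode the creation time in milliseconds since the Discord epoch (the first second of 2015). The sketch below derives an account's age from its ID. The function names and the 72-hour default are assumptions for illustration, and wiring the check into a bot's join handler is left out.

```python
from datetime import datetime, timedelta, timezone

DISCORD_EPOCH_MS = 1420070400000  # 2015-01-01T00:00:00Z in Unix milliseconds


def account_created_at(user_id: int) -> datetime:
    """Decode the creation time embedded in a Discord snowflake ID."""
    timestamp_ms = (user_id >> 22) + DISCORD_EPOCH_MS
    return datetime.fromtimestamp(timestamp_ms / 1000, tz=timezone.utc)


def is_too_young(user_id: int, now: datetime, min_age=timedelta(hours=72)) -> bool:
    """Return True if the account is younger than min_age at time `now`.

    `now` must be timezone-aware. The 72-hour default is an assumption
    taken from the upper end of the range mentioned in the bullet above.
    """
    return now - account_created_at(user_id) < min_age
```

A typical call would be `is_too_young(member_id, datetime.now(timezone.utc))`, with the result deciding whether to restrict the new member or let them through.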

For Giveaway-Heavy / Web3 Communities

- Set Up Strict Entry Verification: Do not make entry easy. You need a strict verification gate right at the start.

- Stop Rewarding Trivial Tasks: Do not give away value for tasks that bots excel at (e.g., "Follow us on X" or "Send 5 messages in general chat").

- Focus on High-Friction, High-ROI Actions: Shift your reward structure to external, high-friction activities that directly impact your ROI, like signing up on your website, completing a KYC flow, or performing an on-chain action.

Conclusion

The intent of bad actors is always the same: extract value or cause disruption. Whether they use a burner account to bypass a Web2 AutoMod or a sophisticated bot ring to farm a Web3 giveaway, understanding their patterns lets community builders stay one step ahead.